The Dark Secret of Artificial Intelligence: The Black Box Problem

Artificial intelligence has transformed nearly every industry in the world. Today, AI systems help diagnose diseases, recommend products, optimize traffic, detect fraud, and even assist in legal decisions. Yet one of the biggest challenges hidden beneath the surface of this powerful technology is what researchers call the black box problem. Although AI can deliver remarkable results, the process by which it makes those decisions is often opaque, inscrutable, and inaccessible to human understanding.

This lack of interpretability creates a paradox. We are increasingly dependent on AI for critical decisions, but we often do not know why the technology reaches the conclusions it does. The consequences are real: legal challenges, ethical dilemmas, and even safety risks can arise when AI decisions cannot be explained. To use AI responsibly, it is crucial to understand the black box problem, its causes, real-world implications, and how modern research and policy approaches attempt to address it.

What Is the Black Box AI Problem?

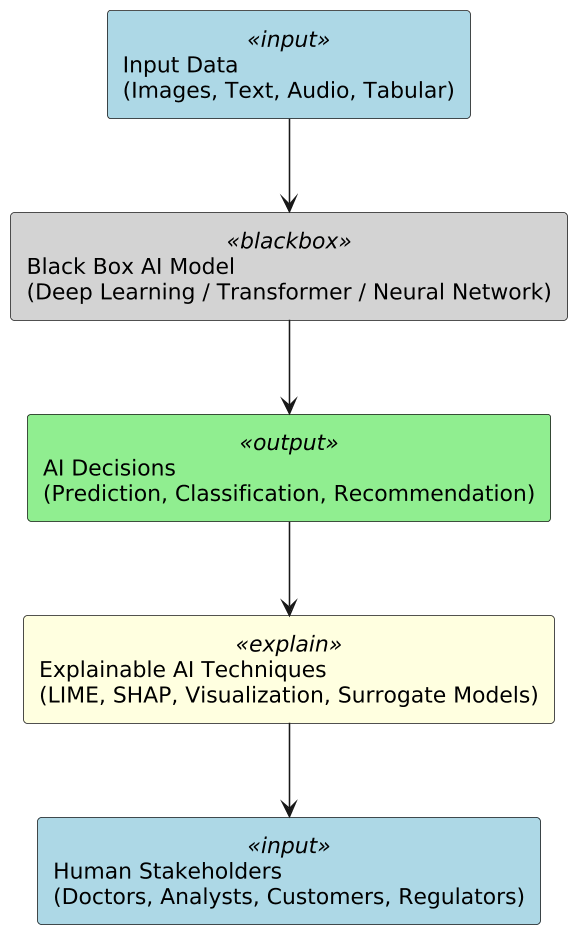

The black box problem refers to situations where an AI model’s inner workings, particularly how it arrives at specific decisions, are not easily interpretable by humans. Many modern AI systems are built on neural networks, especially deep learning models, that learn from large volumes of data. Unlike simpler, rule‑based algorithms, these networks do not provide clear, human‑readable reasoning for their decisions.

For example, a deep learning model used to diagnose a medical image may output “positive” or “negative” for a disease, but clinicians may not understand which specific features of the image led to that conclusion. This problem occurs because many AI models represent information in distributed patterns of weights and activations across thousands or millions of parameters, rather than in symbolic rules or logic that humans can easily interpret.

This lack of transparency is not just theoretical. It directly impacts usability, accountability, safety, and trust in AI systems — especially when the decisions affect people’s lives.

Why the Black Box Problem Matters

Understanding AI decisions is not just an academic concern. It has practical importance in several key areas:

1. Ethical and Fairness Issues:

If an AI system cannot explain how decisions are made, it can inadvertently propagate bias. For example, algorithms trained on historical data may encode societal prejudices against certain groups. Without interpretability, identifying and correcting these biases becomes difficult.

2. Regulatory Compliance:

In many jurisdictions, automated decisions that affect individuals, such as credit approval or medical recommendations, are subject to rules requiring transparency and explainability. The European Union’s General Data Protection Regulation (GDPR) is often described as creating a “right to explanation”: Article 22 restricts decisions based solely on automated processing, and Articles 13 to 15 entitle individuals to meaningful information about the logic involved, although the exact scope of this right is debated (https://gdpr.eu/).

3. Safety and Accountability:

In safety‑critical systems like autonomous vehicles or medical AI, understanding why a system made a certain decision can be vital for diagnosing failures, improving performance, or establishing accountability.

4. User Trust and Adoption:

Users are more likely to adopt AI systems when they can understand how decisions are made. Transparency fosters confidence, while opacity breeds mistrust and hesitation.

Why Black Boxes Happen: The Technical Roots

The black box problem arises from several technical and architectural features of modern AI:

Complex Model Structures:

Deep learning models, such as convolutional neural networks (CNNs) or transformer architectures (typically built with frameworks like TensorFlow and PyTorch), are designed to discover intricate patterns in data. Their internal representations are mathematically powerful but not inherently aligned with human logic or reasoning.

High Dimensionality:

AI systems often operate on data with thousands of features — for example, pixel values in an image or word embeddings in text. The interactions between these features can be too complex to trace back to simple rules.

Nonlinear Transformations:

Neural networks perform nonlinear transformations of inputs through multiple layers, creating representations that are not easily reducible to simple cause‑effect explanations.
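A minimal sketch of why layered nonlinearities resist simple cause-effect summaries, using a hand-built network with two ReLU units. The weights are hypothetical and chosen only for illustration; they do not come from any trained model. The same change to x1 pushes the output up in one context and down in another, so no single statement like "x1 raises the output" is correct.

```python
def relu(v):
    """Rectified linear unit, a common neural network nonlinearity."""
    return max(0.0, v)


def tiny_network(x1, x2):
    """Two hidden ReLU units feeding one linear output (hypothetical weights)."""
    h1 = relu(x1 + x2)
    h2 = relu(x1 + x2 - 1.0)
    return h1 - 2.0 * h2


# Effect of switching x1 from 0 to 1, measured in two different contexts.
effect_when_x2_is_0 = tiny_network(1.0, 0.0) - tiny_network(0.0, 0.0)
effect_when_x2_is_1 = tiny_network(1.0, 1.0) - tiny_network(0.0, 1.0)

print(effect_when_x2_is_0)  # 1.0
print(effect_when_x2_is_1)  # -1.0
```

Real networks stack many such units, so the context that determines a feature's effect can involve thousands of other inputs at once.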

Distributed Representations:

Rather than making decisions based on a few identifiable rules, deep neural networks distribute learned representations across many parameters, making the decision path diffuse and difficult to trace.

Real-World Examples of the Black Box Problem

Healthcare Diagnostics

AI systems are now used to analyze medical images for early detection of conditions like cancer. However, if a model misclassifies an image, doctors need to understand why the decision was made, both for diagnostic confidence and for patient safety. Without explainability, verifying the model’s logic is challenging and risky. Researchers working on AI in healthcare often emphasize explainability as a core requirement for clinical adoption (see https://www.who.int/health-topics/artificial-intelligence).

Credit Scoring and Financial Decisions

Banks and lenders use AI models to assess credit risk. When a loan application is denied, individuals and regulators demand justification. Without a transparent decision path, lenders risk legal challenges and reputational harm. Regulatory bodies increasingly require explainable credit decisioning.

Autonomous Driving

Self‑driving cars process sensor data through complex deep neural networks to navigate roads. When accidents occur, investigators must understand what the vehicle’s AI “saw” and how it responded. Black box systems make this audit trail difficult to reconstruct.

Approaches to Reduce AI Opacity

As AI systems become more complex, developers and researchers have created several practical approaches to make AI decisions more transparent. These approaches allow humans to interpret, audit, and trust AI outputs, especially in high-stakes domains like healthcare, finance, and autonomous systems. Here are the key strategies currently in use:

1. Interpretable Models by Design

One of the simplest approaches is to use inherently interpretable models such as decision trees, linear regression, or rule-based algorithms. These models allow users to trace exactly how inputs affect outputs. While they may not match the predictive power of deep neural networks, they are suitable for applications where transparency is critical, like credit scoring or regulatory compliance.
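As a rough illustration of what "tracing exactly how inputs affect outputs" can look like, here is a sketch of a linear scoring model that returns each feature's contribution alongside the score. The feature names, weights, and bias are invented for this example and do not reflect any real credit model.

```python
# Hypothetical weights for an illustrative credit score; not taken from any real lender.
WEIGHTS = {"income_k": 0.02, "debt_ratio": -3.0, "late_payments": -0.5}
BIAS = 0.5


def score_with_trace(applicant):
    """Return the score plus each feature's additive contribution to it."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    return BIAS + sum(contributions.values()), contributions


applicant = {"income_k": 60, "debt_ratio": 0.4, "late_payments": 2}
score, trace = score_with_trace(applicant)

# List contributions from most negative to most positive.
for name, value in sorted(trace.items(), key=lambda item: item[1]):
    print(f"{name}: {value:+.2f}")
print(f"score: {score:+.2f}")
```

Because the score is a plain sum, the trace is the complete explanation: nothing about the decision is hidden outside the listed contributions.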

2. Post-hoc Explanation Techniques

For complex models, post-hoc methods help explain decisions after the fact. Popular open-source tools include:

- LIME (Local Interpretable Model-agnostic Explanations): Explains individual predictions by approximating complex models locally with simpler, interpretable models.

- SHAP (SHapley Additive exPlanations): Quantifies feature contributions to predictions using game-theory-based Shapley values, providing global and local interpretability.

- AI Explainability 360 by IBM: Offers a comprehensive suite of algorithms for global and local explanation, bias detection, and fairness analysis.

These tools help analysts understand why an AI model made a specific decision without modifying the original model.
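The idea behind Shapley-value attribution can be sketched without any library. The example below computes exact Shapley values for a three-feature stand-in model by averaging each feature's marginal contribution over every ordering of the features. The model, the instance, and the all-zero baseline are assumptions made for illustration. This is not the SHAP package's API, and practical tools rely on approximations because the number of orderings grows factorially.

```python
from itertools import permutations


def black_box(features):
    """Stand-in model (hypothetical) with an interaction between b and c."""
    return 2.0 * features["a"] + features["b"] * features["c"]


def shapley_values(model, instance, baseline):
    """Exact Shapley values: average marginal contribution over all orderings."""
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)  # start with every feature at its baseline value
        previous = model(current)
        for name in order:
            current[name] = instance[name]  # reveal one feature at a time
            updated = model(current)
            totals[name] += updated - previous
            previous = updated
    return {name: total / len(orderings) for name, total in totals.items()}


instance = {"a": 1.0, "b": 2.0, "c": 3.0}
baseline = {"a": 0.0, "b": 0.0, "c": 0.0}
phi = shapley_values(black_box, instance, baseline)

print(phi)  # {'a': 2.0, 'b': 3.0, 'c': 3.0}
```

The contributions sum to the difference between the model's output on the instance (8.0) and on the baseline (0.0), and the interaction term is split evenly between b and c.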

3. Feature Importance and Visualization

Visualizing the most influential features allows stakeholders to see what drives model predictions. Techniques like heatmaps for images, attention maps in NLP, or bar charts for tabular data provide intuitive explanations. For example, in medical imaging, heatmaps can highlight regions of an X-ray that influenced a diagnosis, enabling doctors to validate AI decisions.
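One simple way to build such a heatmap is occlusion: blank out one region at a time and record how much the model's score drops. The sketch below uses a hypothetical stand-in classifier that only looks at the center of a 4x4 "image"; a real model and real pixel data would replace both.

```python
def classifier_score(image):
    """Hypothetical stand-in: responds only to the central 2x2 region."""
    return sum(image[r][c] for r in (1, 2) for c in (1, 2)) / 4.0


def occlusion_map(score_fn, image, fill=0.0):
    """Score drop caused by replacing each pixel, one at a time, with `fill`."""
    base = score_fn(image)
    heat = []
    for r, row in enumerate(image):
        heat_row = []
        for c, _ in enumerate(row):
            occluded = [list(line) for line in image]  # copy so the input is untouched
            occluded[r][c] = fill
            heat_row.append(base - score_fn(occluded))
        heat.append(heat_row)
    return heat


image = [[1.0] * 4 for _ in range(4)]
heatmap = occlusion_map(classifier_score, image)

for row in heatmap:
    print(row)
# [0.0, 0.0, 0.0, 0.0]
# [0.0, 0.25, 0.25, 0.0]
# [0.0, 0.25, 0.25, 0.0]
# [0.0, 0.0, 0.0, 0.0]
```

The nonzero cells mark the pixels the model actually depends on, which is the information a clinician would compare against the anatomically relevant region.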

4. Model Distillation and Surrogate Models

Complex AI models can be approximated with simpler models trained to mimic their outputs, a process called model distillation. When the simpler model is itself interpretable, it is often called a surrogate model. For instance, a large neural network can be “distilled” into a small decision tree that mirrors its behavior. Some accuracy may be sacrificed, and the proxy may disagree with the original model on some inputs, but it provides a transparent approximation that humans can audit and understand.
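A minimal sketch of the surrogate idea: query an opaque model on sample inputs, fit a one-rule decision stump to its answers, and report how often the two agree (the surrogate's fidelity). The black-box function and the sample grid are hypothetical stand-ins, and a depth-one stump is used only to keep the example short.

```python
def black_box(x):
    """Hypothetical opaque model over (income, debt); returns a 0/1 label."""
    income, debt = x
    return 1 if (income ** 1.5) / 50.0 - 2.0 * debt * debt > 3.0 else 0


def fit_stump(samples, labels):
    """Find the single-feature threshold rule that best matches the labels."""
    best = None  # (agreements, feature_index, threshold, label_if_above)
    for f in range(len(samples[0])):
        values = sorted({s[f] for s in samples})
        for lo, hi in zip(values, values[1:]):
            threshold = (lo + hi) / 2.0
            for above in (0, 1):
                agreements = sum(
                    (above if s[f] > threshold else 1 - above) == y
                    for s, y in zip(samples, labels)
                )
                if best is None or agreements > best[0]:
                    best = (agreements, f, threshold, above)
    return best


samples = [(income, debt) for income in range(10, 101, 10) for debt in (0.1, 0.5, 0.9)]
labels = [black_box(s) for s in samples]  # the surrogate learns from the model, not from ground truth
agreements, feature, threshold, above = fit_stump(samples, labels)

print(f"rule: predict {above} if feature[{feature}] > {threshold}")
print(f"fidelity: {agreements}/{len(samples)}")
```

On this grid the stump recovers a rule on income alone and agrees with the black box on 29 of 30 samples. The one disagreement shows where the simple proxy hides the influence of debt.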

5. Causal Analysis and Counterfactual Explanations

Instead of merely reporting correlations, some explainability methods try to capture cause-effect relationships. Counterfactual explanations answer questions like, “What would need to change for the AI to make a different decision?” Such an explanation describes how the model’s output would change, which is not necessarily how the real-world outcome would change. This approach is highly valuable in scenarios such as loan approvals, where users want actionable insights on improving their outcomes.
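A counterfactual search can be sketched as follows: starting from a denied application, change one feature at a time in fixed steps until the decision flips. The decision rule, feature names, step sizes, and bounds are all hypothetical, and practical methods also search over combinations of features and weigh how realistic each change is.

```python
def approve(applicant):
    """Hypothetical stand-in decision rule using integer arithmetic."""
    score = (3 * applicant["income_k"]
             - 4 * applicant["debt_pct"]
             - 60 * applicant["late_payments"])
    return score >= 0


def single_feature_counterfactuals(decide, applicant, moves, max_steps=20):
    """For each feature, find the first stepped value that flips the decision."""
    results = {}
    for name, (step, lower, upper) in moves.items():
        candidate = dict(applicant)  # all other features stay fixed
        for _ in range(max_steps):
            candidate[name] += step
            if not lower <= candidate[name] <= upper:
                break  # left the plausible range without flipping
            if decide(candidate):
                results[name] = candidate[name]
                break
    return results


applicant = {"income_k": 50, "debt_pct": 45, "late_payments": 1}
# (step, lower bound, upper bound) per feature; values are illustrative.
moves = {"income_k": (5, 0, 300), "debt_pct": (-5, 0, 100), "late_payments": (-1, 0, 50)}
counterfactuals = single_feature_counterfactuals(approve, applicant, moves)

print(approve(applicant))  # False
print(counterfactuals)     # {'income_k': 80, 'debt_pct': 20}
```

Read as an explanation, the result says the application would have been approved with an income of 80 or a debt percentage of 20, while clearing the single late payment would not have been enough on its own.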

6. Human-in-the-Loop Systems

Integrating humans into the AI decision-making loop ensures oversight and accountability. Experts can review AI outputs, verify explanations, and intervene when necessary. Platforms like H2O Driverless AI combine automated AI with interpretability features to allow users to monitor, tweak, and validate model behavior.
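One common pattern for this kind of oversight is confidence-based routing, sketched below: the model decides only when its reported confidence clears a threshold, and everything else goes to a human reviewer. The function name, the 0.9 threshold, and the record fields are assumptions for illustration and are not taken from any particular platform.

```python
def route_decision(prediction, confidence, threshold=0.9):
    """Accept confident model outputs; send the rest to a human reviewer."""
    if confidence >= threshold:
        return {"decision": prediction, "decided_by": "model"}
    # Below the threshold, the model's output is only a suggestion.
    return {
        "decision": None,
        "decided_by": "human_review",
        "model_suggestion": prediction,
    }


queue = [("approve", 0.97), ("deny", 0.62)]
routed = [route_decision(prediction, confidence) for prediction, confidence in queue]

for item in routed:
    print(item)
```

This only helps if the confidence values are reasonably calibrated, so the threshold itself is something reviewers should monitor and adjust over time.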

7. Transparent Data and Process Documentation

Explainability is not just about the model — it also depends on clear documentation of training data, preprocessing steps, feature selection, and known biases. Keeping comprehensive records ensures that AI predictions can be traced back to their sources, making audits and compliance easier.
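Such documentation can be kept in a structured, machine-readable form so it travels with the model. The sketch below shows one possible shape for an audit record. The class, its field names, and all the values are hypothetical placeholders, not an established standard.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class ModelRecord:
    """Hypothetical audit record; field names are illustrative, not a standard."""
    model_name: str
    training_data: str
    preprocessing_steps: list = field(default_factory=list)
    selected_features: list = field(default_factory=list)
    known_biases: list = field(default_factory=list)


record = ModelRecord(
    model_name="credit-risk-demo",
    training_data="placeholder description of the dataset and its collection period",
    preprocessing_steps=["drop rows with missing income", "scale numeric features"],
    selected_features=["income_k", "debt_ratio", "late_payments"],
    known_biases=["older applicants underrepresented in the sample"],
)

# Serialize so the record can be stored and versioned next to the model artifact.
serialized = json.dumps(asdict(record), indent=2)
print(serialized)
```

Storing the record as JSON next to each model version gives auditors a fixed place to look when a prediction needs to be traced back to its data and preprocessing.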

How Regulation Is Addressing the Black Box Problem

Policy makers worldwide recognize that opacity in AI poses significant risks. Regulatory approaches range from voluntary transparency guidelines to mandatory explainability in certain circumstances:

- European Union – GDPR: Restricts decisions based solely on automated processing and entitles individuals to meaningful information about the logic involved, often summarized as a “right to explanation” (https://gdpr.eu).

- AI Ethics Frameworks: Various governmental and non‑profit organizations publish AI ethics principles emphasizing transparency, fairness, and accountability.

These regulations and frameworks not only protect users but also steer industry practices toward more interpretable AI systems.

The Future of AI Transparency

The black box problem may never disappear entirely, since some level of complexity comes with high‑performance AI. However, the future of responsible AI depends on hybrid systems that combine high accuracy with interpretable logic. Areas of research and development include:

- Causal AI: Understanding causal relationships rather than correlations.

- Human‑in‑the‑Loop Systems: Designing AI that works alongside humans, where humans can override or query decisions.

- Standardized Explanation Protocols: Creating industry‑wide norms for how AI decisions should be explained.

Together, these trends shape a future where AI is powerful and trustworthy.

Conclusion

The black box problem in artificial intelligence remains one of the technology’s darkest secrets — not because it is hidden deliberately, but because it arises naturally from complexity. As AI systems become more capable, they also become more difficult to interpret.

Yet the solution is not to abandon powerful models but to pair them with mechanisms that allow humans to understand and trust them. Whether through explainable AI techniques, regulatory frameworks, or better design practices, the push for transparency is essential for responsible AI.

Understanding and addressing the black box problem is not just a technical challenge — it’s a step toward ensuring that AI enhances human well‑being without sacrificing accountability, fairness, or ethical integrity.